[Progressive schools] as compared with traditional schools [display] a common emphasis upon respect for individuality and for increased freedom; a common disposition to build upon the nature and experience of the boys and girls common to them, instead of imposing from without external subject-matter and standards. They all display a certain atmosphere of informality, because experience has proved that formalization is hostile to genuine mental activity and to sincere emotional expression and growth. Emphasis upon activity as distinct from passivity is one of the common factors….[There is] unusual attention to …normal human relations, to communication …which is like in kind to that which is found in the great world beyond the school doors.

John Dewey, 1928

Were John Dewey alive in 2016 and had he joined me in a brief visit to the AltSchool on October 20, 2016, he would, I believe, have nodded in agreement with what he saw on that fall day and affirmed what he said when he became honorary president of the Progressive Education Association in 1928.

The AltSchool embodies many of the principles of progressive education from nearly a century ago–as do other schools in the U.S. Just as Dewey’s Lab School at the University of Chicago (1896-1904) became a hothouse experiment as a private school, so have the AltSchool and its network of “micro-schools” in the Bay Area and New York City over the past five years (see here, here, here, and here). Progressive schools, then and now, varied greatly, yet champions of such schools from Dewey to Francis Parker to Jesse Newlon to AltSchool’s Max Ventilla believed they were already or about to become “good” schools.

One major difference, however, between progressives then and now is current technology. Unknown to Dewey and his followers in the early 20th century, new technologies have become married to these progressive principles in ways that reflect both wings of the earlier reform movement (see here).

In this post, I want to describe what I saw that morning in classrooms–sadly without the company of Dewey–and what I heard from the founder of the AltSchool network, Max Ventilla.

Alt/Schools

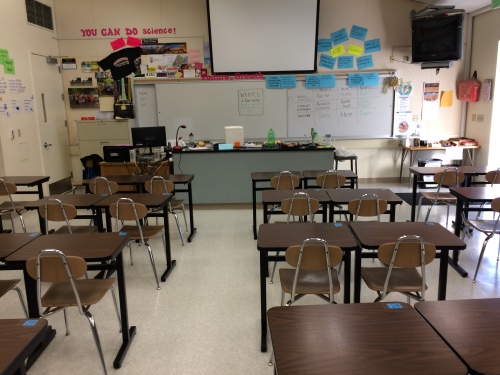

There are five “micro-schools” in San Francisco. I visited Yerba Buena, a K-8 school of over 30 students whose daily schedule gives a hint of what it is about. I went unescorted into three classes –upper-elementary and middle school social studies and math lessons (primary classes were on a field trip to a museum)–which gave me a taste of the teaching, the content, student participation, and the level of technology integration. I spoke briefly with two of the three teachers whose lessons I observed and got a flavor of their enthusiasm for their students and the school.

Readers who want a larger slice of what this private school seeks to do (tuition runs around $26,000 for 2015-2016) can see video clips and read text about the philosophy, program, teaching staff, and the close linkages between technology in this and sister “micro-schools” (see Alt/school materials here).

Since I parachuted in for a few hours–I plan to see another “micro-school” soon–I cannot describe full lessons, the entire program, teaching staff or even offer an informed opinion of Yerba Buena. For those readers who want such descriptions (and judgments), there are journalistic accounts (see above) and the AltSchool’s own descriptions for parents (see above).

Yet what was clear to me even in the morning’s glimpse of a “micro-school” was that the theoretical principles of Deweyan thought and practice in his Lab School over a century ago, and in the evolving network of both private and public progressive schools across the nation in subsequent decades, were apparent in what I saw in a few classrooms at Yerba Buena. One doesn’t need a weather vane to see which way the wind is blowing.

But there was a modern twist and a new element in the progressive portfolio of practices: the ubiquitous use of technology by teachers and students as teaching and learning tools. Unlike most places that have adopted laptops and tablets wholesale, what I saw for a few hours was that the use of new technologies was in the background, not the foreground, of a lesson. Much as pencil and paper have been taken-for-granted tools in both teaching and learning over the past century, so now are digital ones.

What I also found useful in looking at a progressive vision of private schooling in practice was my 45-minute talk with the founder of these experimental “micro schools.”

Max Ventilla

The founder of AltSchool has been profiled many times and has given extensive interviews (see here and here). In many of these, he tells the “creation story” of how he and his wife searched for a private school that would meet their five-year-old’s needs and potential and then came up empty. “We weren’t seeing,” he said, “the kind of experiences that we thought would really prepare her for a lifetime of change.” He decided to build a school that would be customized for individual students, like their daughter, where children could further their intellectual passions while nourishing all that makes a kid, a kid.

In listening to Ventilla, I heard that story repeated but, far more important, I got a clearer sense of what he has in mind for AltSchool in the upcoming years. Some venture capitalists have invested in the for-profit AltSchool not for a couple of years but for a decade. He sees beyond that horizon, however, for his networks to scale up, become more efficient and less costly, and attract more and more parents as a progressive brand that will, at some future point, reshape how private and public schools operate. And turn a profit for investors. Ventilla wants to do well by doing good.

His conceptual framework for the network and its eventual growth is a mix of what he learned personally from starting and selling software companies and working at Google in personalizing users’ search results to increase consumer purchases (see here). Ventilla sees the half-dozen or more “micro-schools” in different cities as part of a long-term research-and-development strategy that would build networks of small schools as AltSchool designers, software engineers, and teachers learn from their mistakes. As they slowly get larger, key features of AltSchool–building personalized learning platforms, for example–will be licensed to private (see here) and eventually public schools.

Ventilla mixes the language of whole child development, individual differences, the importance of collaboration among children and between children and adults with business ideas and vocabulary of “soft vs. hard technology,” “crossing the threshold of efficacy,” “effects per costs,” and scaling up networks to eventually become profitable.

Both wings of progressivism (see Part 1) are present in AltSchool’s collecting huge amounts of data about students and in its on-site engineers and teachers using that data to create customized playlists for each of their K-8 students across all subject areas. Efficiency and effectiveness are married to progressive principles in practice.

That is the dream that I heard from Max Ventilla one October morning.

Part 2 will describe my visit to a nearby micro-school, South-of-Market (SOMA), which 33 middle school students (6th through 8th graders) attend.